The Moment We’ve All Been Waiting For

At long last, it’s happened. Originality AI recently announced that it can detect AI-generated content in languages other than English.

Yes, the top AI detector can now identify AI content in 14 additional languages, though with some caveats and limitations. Still, it’s progress.

Today, to test Originality AI’s capabilities, I will run it against each of the 14 languages.

Here are the languages it now claims to support:

- French

- Spanish

- Chinese (does not work)

- Vietnamese

- German

- Italian

- Japanese (does not work)

- Polish

- Russian

- Persian

- Dutch

- Greek

- Portuguese

- Turkish

I’m fluent in Russian, English, Polish and German (okay, okay, I’m bragging a little). In other languages, not so much. But ChatGPT and Claude 2 will help. Let’s see what Originality spits out.

The method: 14 tests each with ChatGPT (GPT-4) and Claude 2, for 28 tests in total.

As it turns out, Originality AI doesn’t actually work with all the languages it claims: it can’t read ideographic scripts (Chinese and Japanese), so fewer tests produced results.

My goal here is not to beat AI detection. Mainly, I want to show whether Originality AI can truly handle different languages.

The prompt is simple: ask both ChatGPT and Claude 2 to write at a level a 6th grader could understand. Same theme each time, different languages.

Note: I’m using ChatGPT Plus which provides access to GPT-4, one of the most advanced AI models.

Let’s go!

Key Findings:

- Overall accuracy for Claude 2 and GPT-4: 98%

- GPT-4 accuracy: 99%

- Claude 2 accuracy: 95%

- Languages that actually work, including English: 13

- Originality AI cannot detect Chinese and Japanese

- No special steps needed to test content in other languages: multilingual detection kicks in automatically

Putting Originality AI’s Multi-Language Detection to the Test

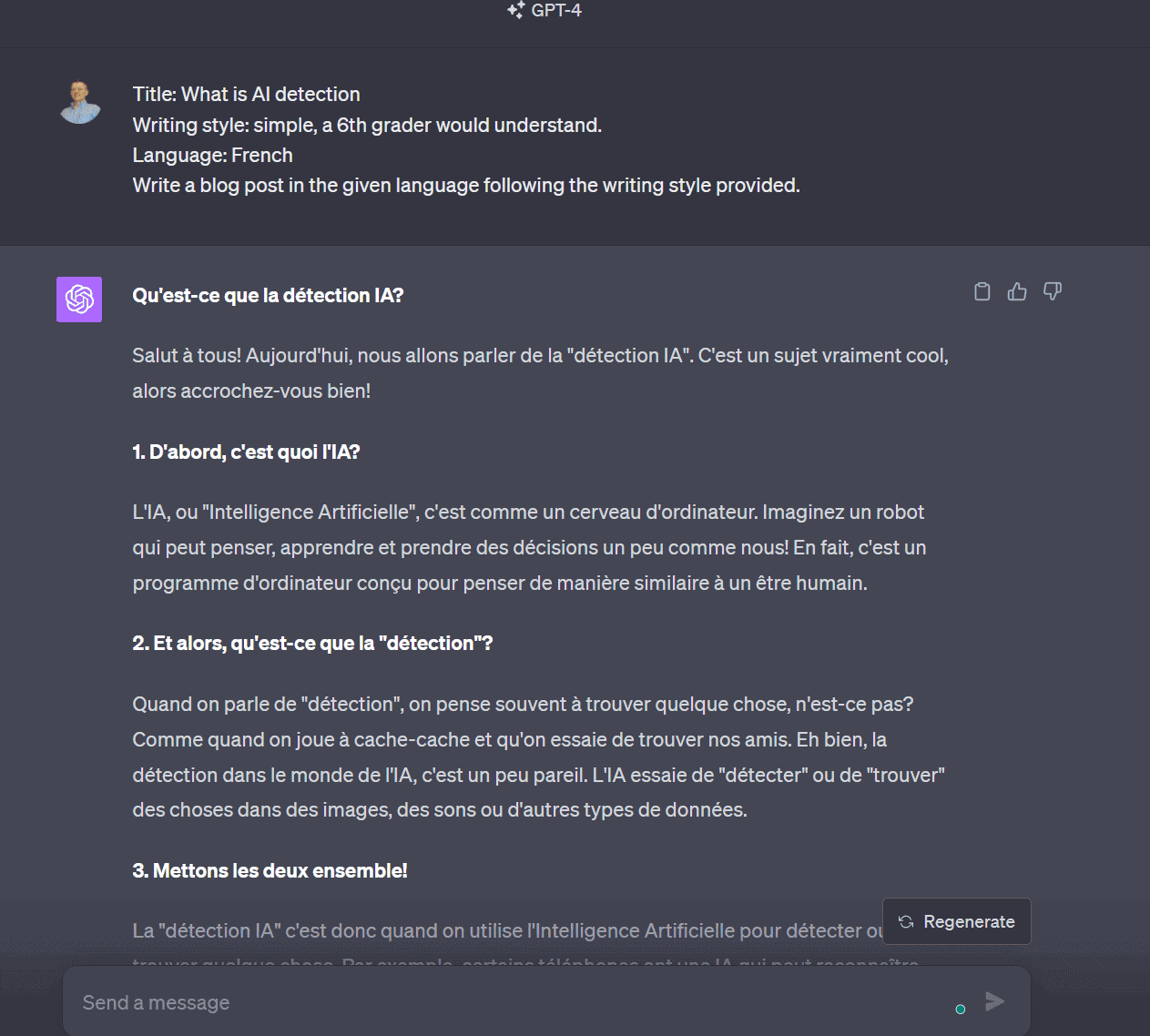

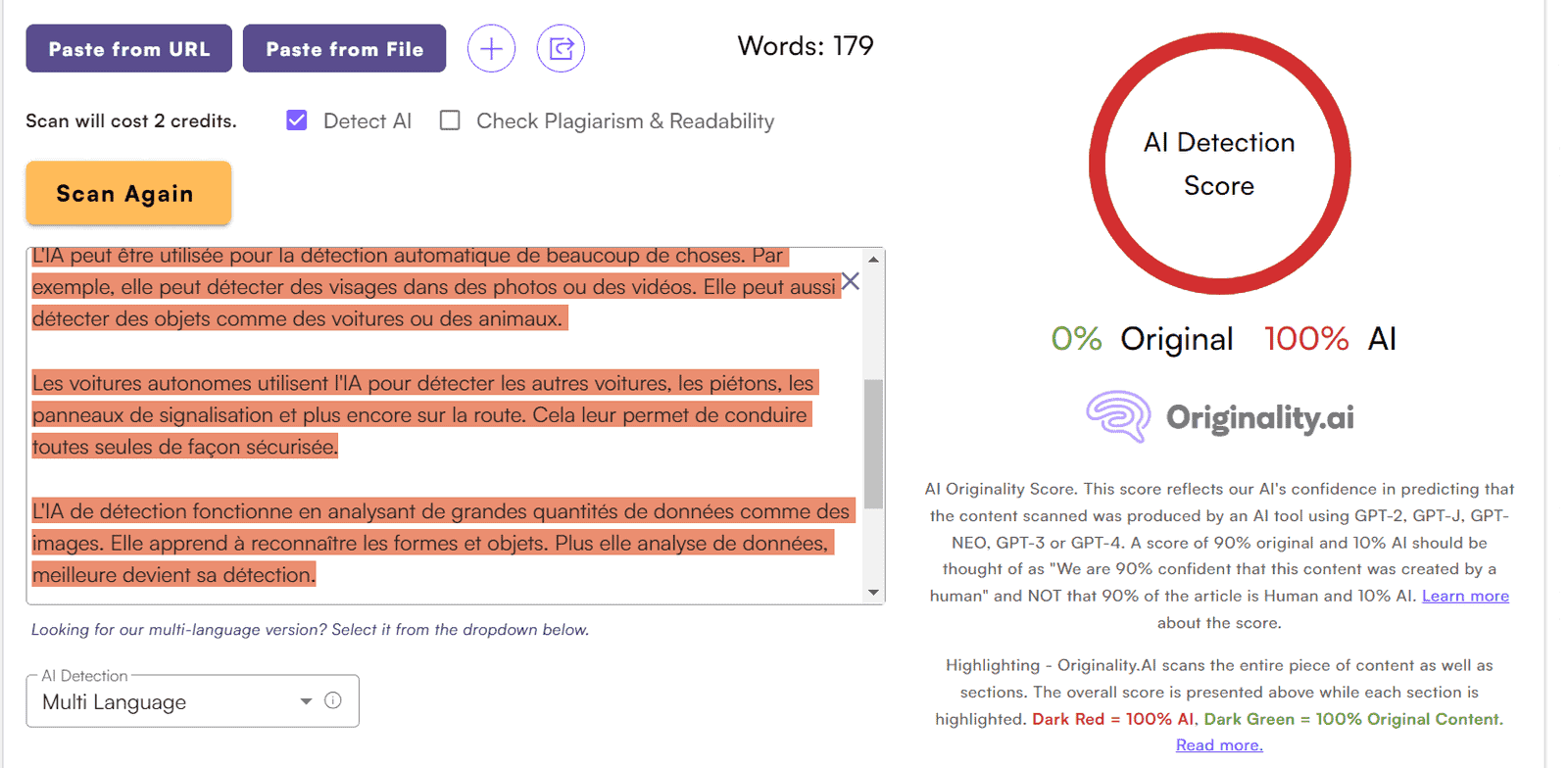

Test 1. French

We ask ChatGPT (GPT-4) to write an article about AI detection. As mentioned, the theme stays the same throughout; only the language changes.

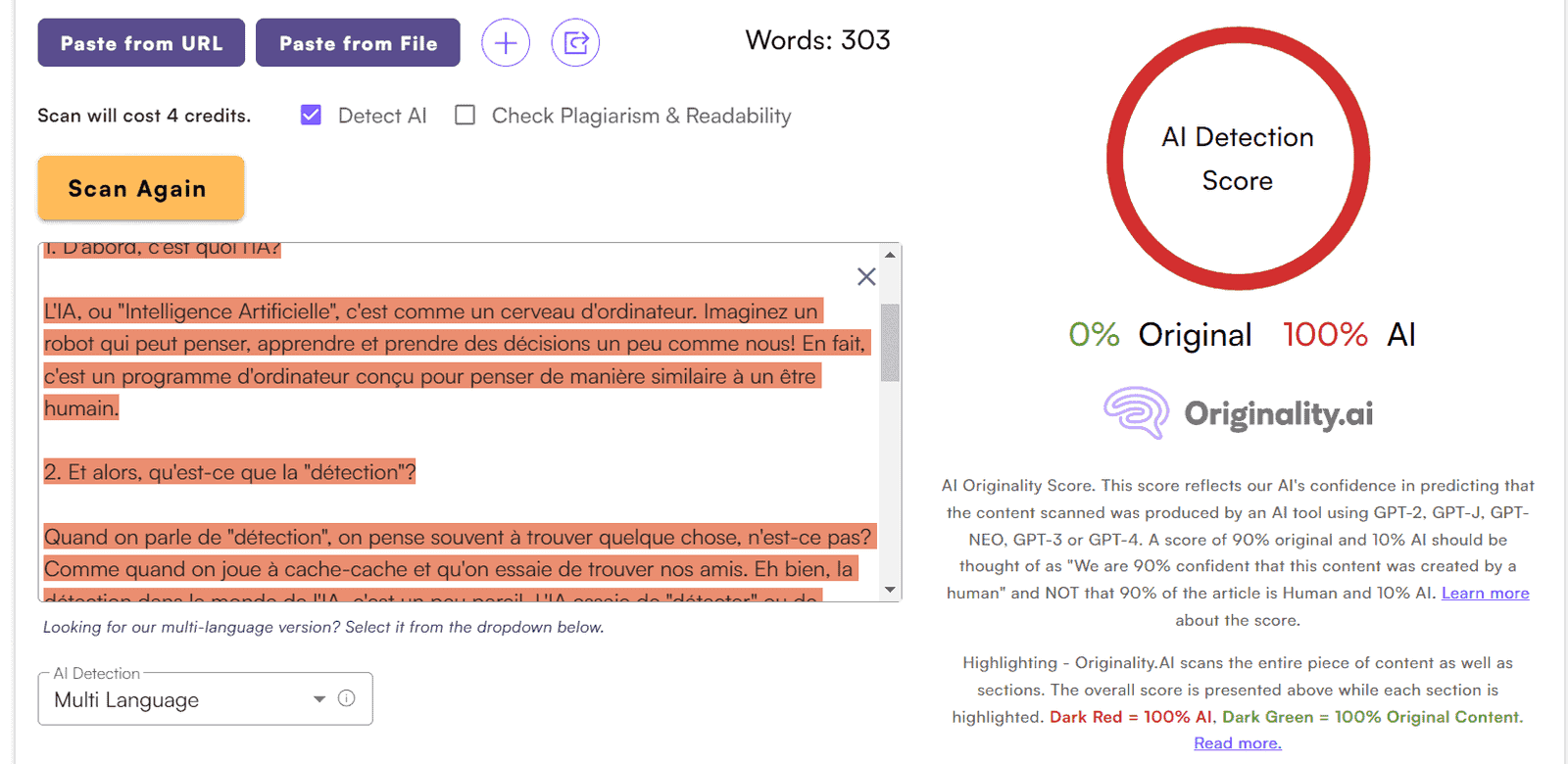

We check it in Originality.AI:

Excellent. 100% AI content. Accurate.

Note a small but important detail: when you paste non-English content into Originality AI, you don’t need to do anything else.

The platform automatically detects the new language and switches to Multi-Language mode:

Originality AI continues to impress.

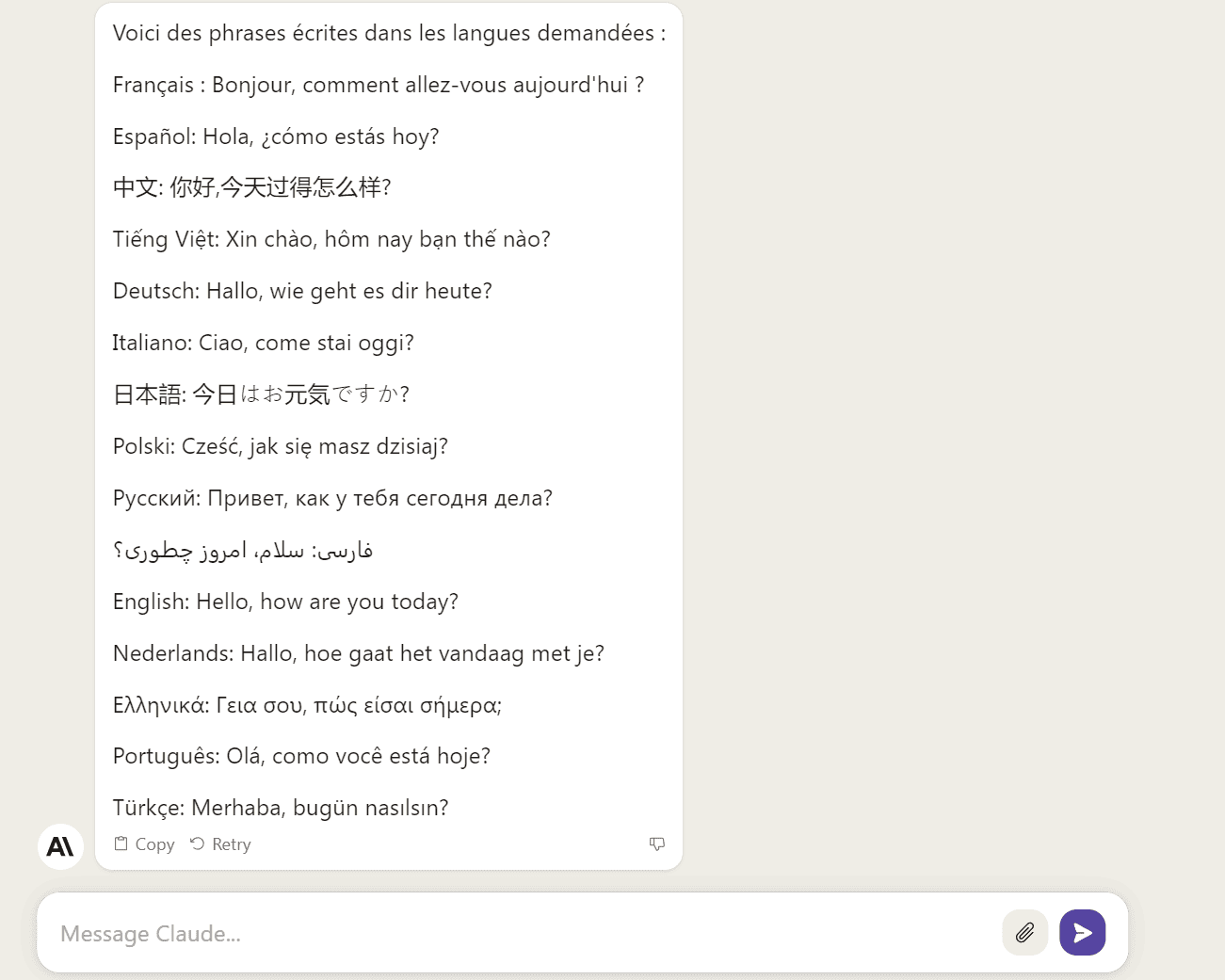

Now we try the same prompt in Claude 2.

By the way, for those unaware, Claude can converse in all these languages. I asked it to confirm:

Great. Let’s test. Claude 2’s article:

Checking in Originality AI:

Perfect: 100% AI-written content.

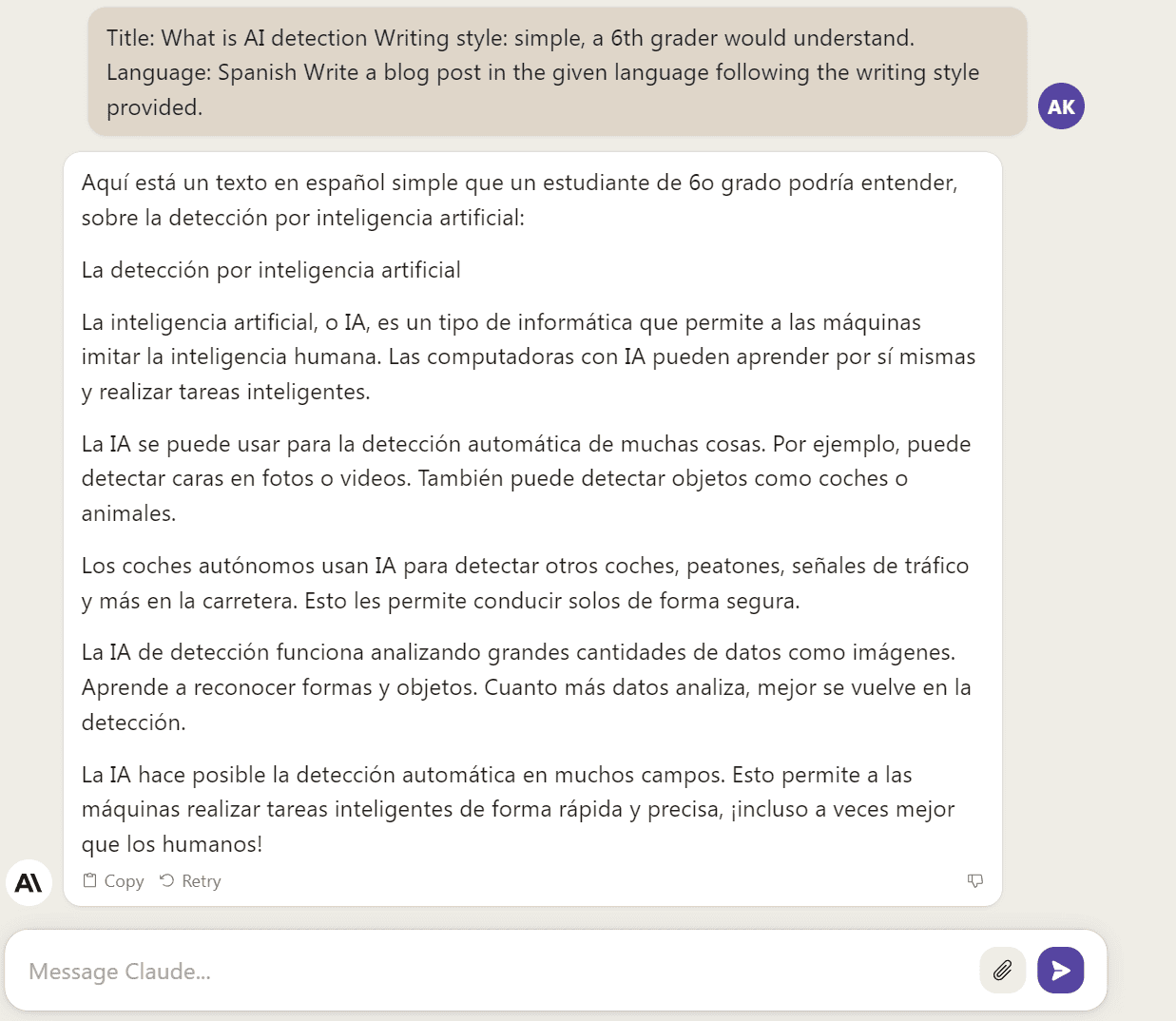

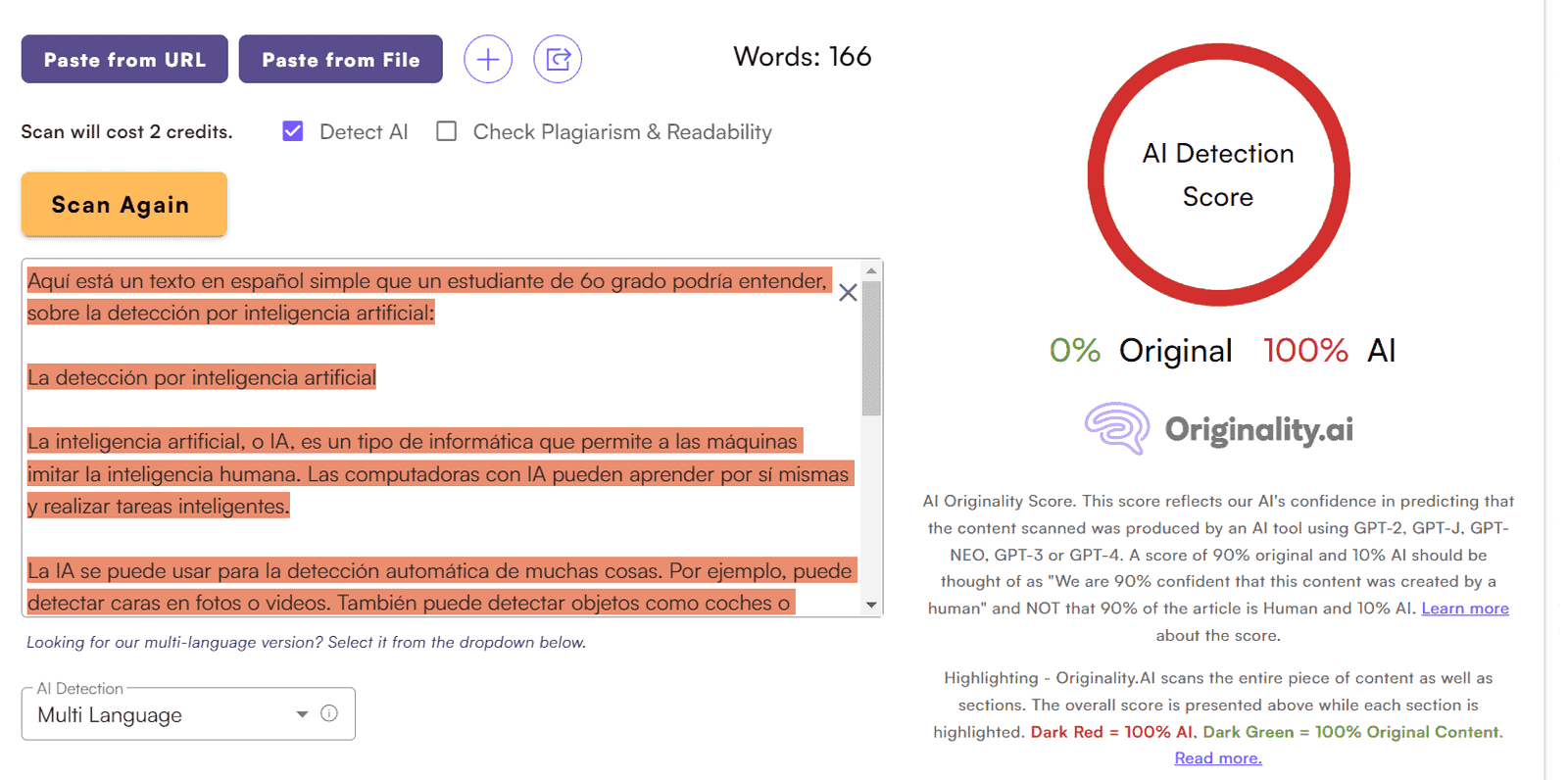

Test 2. Spanish

ChatGPT output on the same topic/prompt:

Originality AI verdict:

Excellent — 0% human content.

Claude 2:

Checking our favorite AI detector (not your fave yet? Really?):

So far, so good. Claude’s content is again detected as 100% AI-generated. No question about it.

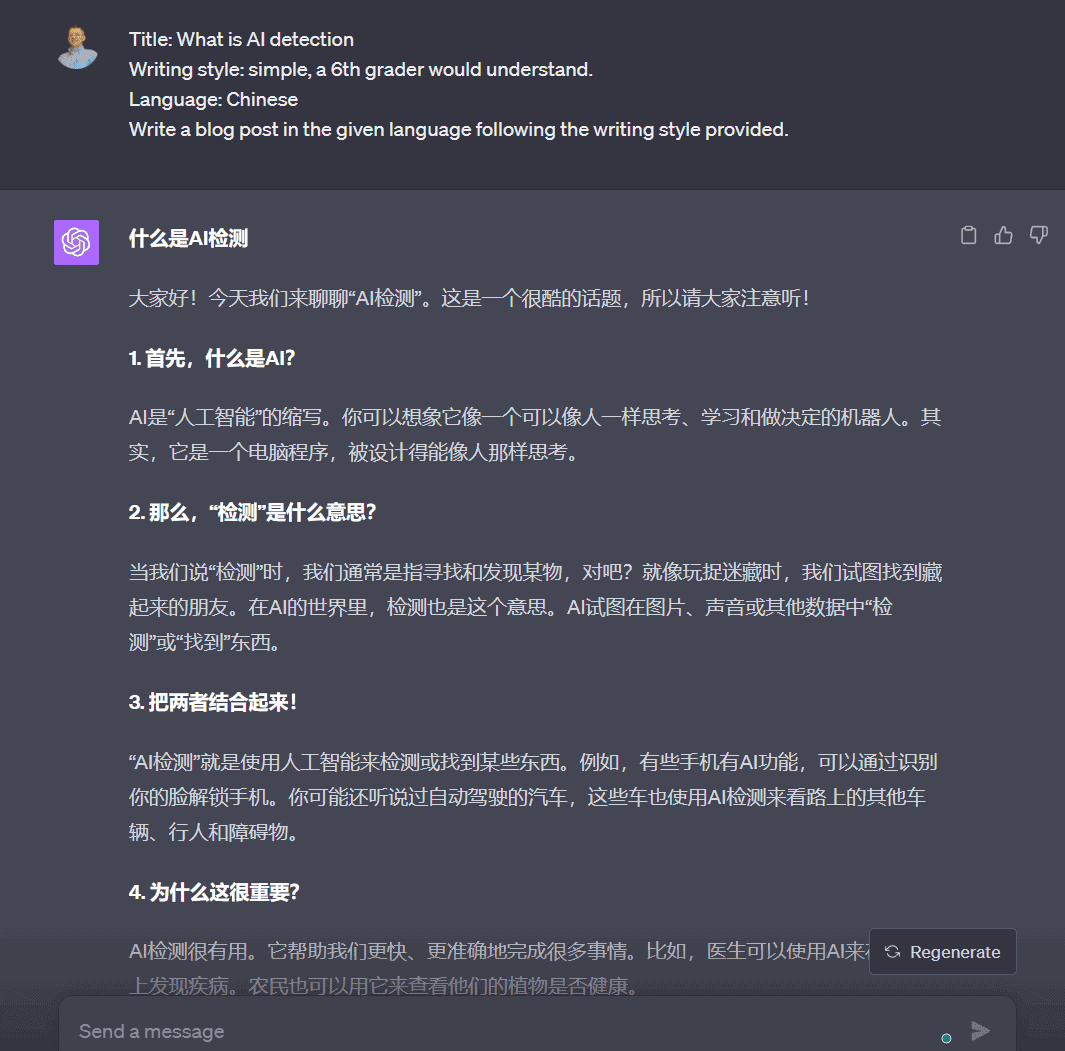

Test 3. Chinese

ChatGPT:

Originality AI says:

Yeah, nothing. Originality AI cannot read Chinese. Not good! Don’t boast about capabilities you don’t have!

And this language wasn’t random. Look:

I didn’t draw this picture myself either; I took it from here.

Oh well. Baby steps. Same for Claude 2 output:

For now, Originality AI can’t process ideographic scripts, even though it handles Cyrillic, Greek and Persian just fine. Moving on, minus one language.
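Since the only failures in these tests were the two ideographic languages, it can help to check a text’s script before pasting it in. Below is a minimal Python sketch, assuming that Chinese and Japanese text can be spotted by the Unicode names of its characters (CJK ideographs, hiragana, katakana). The function names and the 50% threshold are hypothetical choices for illustration; Originality AI does not publish how it handles scripts.

```python
import unicodedata
from collections import Counter

# Prefixes of Unicode character names mapped to script groups.
# This grouping is an illustrative assumption, not anything Originality AI documents.
SCRIPT_PREFIXES = {
    "CJK UNIFIED IDEOGRAPH": "Han",
    "HIRAGANA": "Kana",
    "KATAKANA": "Kana",
    "LATIN": "Latin",
    "CYRILLIC": "Cyrillic",
    "GREEK": "Greek",
    "ARABIC": "Arabic",  # Persian is written in Arabic script
}

# Scripts that returned no result in these tests (Chinese and Japanese).
NO_RESULT_SCRIPTS = {"Han", "Kana"}


def script_counts(text: str) -> Counter:
    """Count letters in `text` by script, skipping spaces, digits and punctuation."""
    counts = Counter()
    for ch in text:
        if not ch.isalpha():
            continue
        name = unicodedata.name(ch, "")
        for prefix, script in SCRIPT_PREFIXES.items():
            if name.startswith(prefix):
                counts[script] += 1
                break
        else:
            counts["Other"] += 1  # no prefix matched
    return counts


def likely_unsupported(text: str, threshold: float = 0.5) -> bool:
    """Return True if at least `threshold` of the letters are Han or Kana."""
    counts = script_counts(text)
    total = sum(counts.values())
    if total == 0:
        return False  # no letters at all, nothing to judge
    share = sum(counts[s] for s in NO_RESULT_SCRIPTS) / total
    return share >= threshold
```

For example, `likely_unsupported("人工智能检测")` returns `True`, while French, Russian, Greek or Persian text returns `False`. Matching on character names with the standard `unicodedata` module avoids hard-coding code-point ranges, at the cost of a short loop over the prefix table for each letter.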

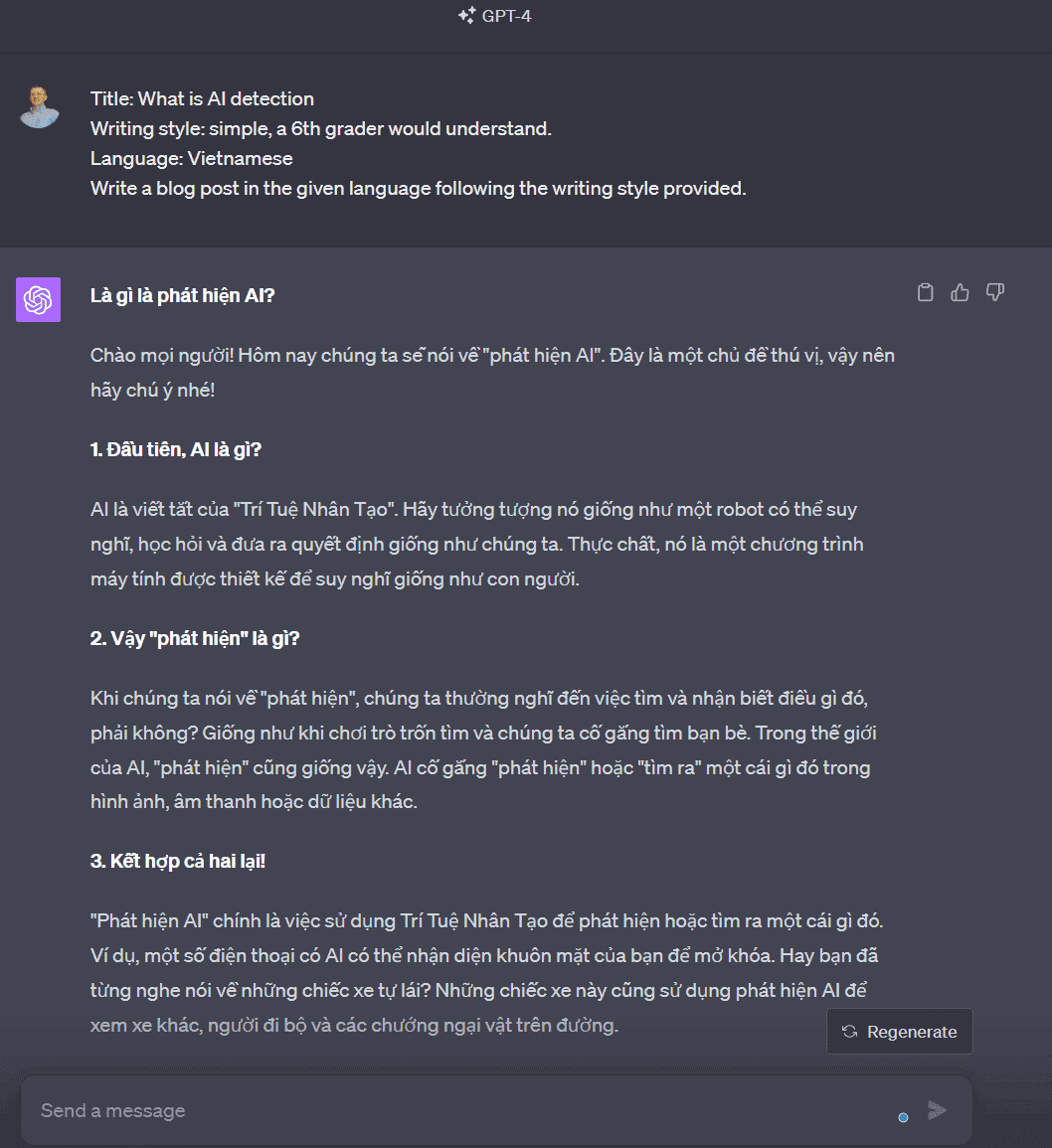

Test 4. Vietnamese

ChatGPT:

Checking Originality:

Good: 100% AI content.

Claude 2:

Checking:

Excellent — 100% AI content.

Test 5. German

ChatGPT:

Checking:

Claude 2:

Checking in Originality AI:

Marching on.

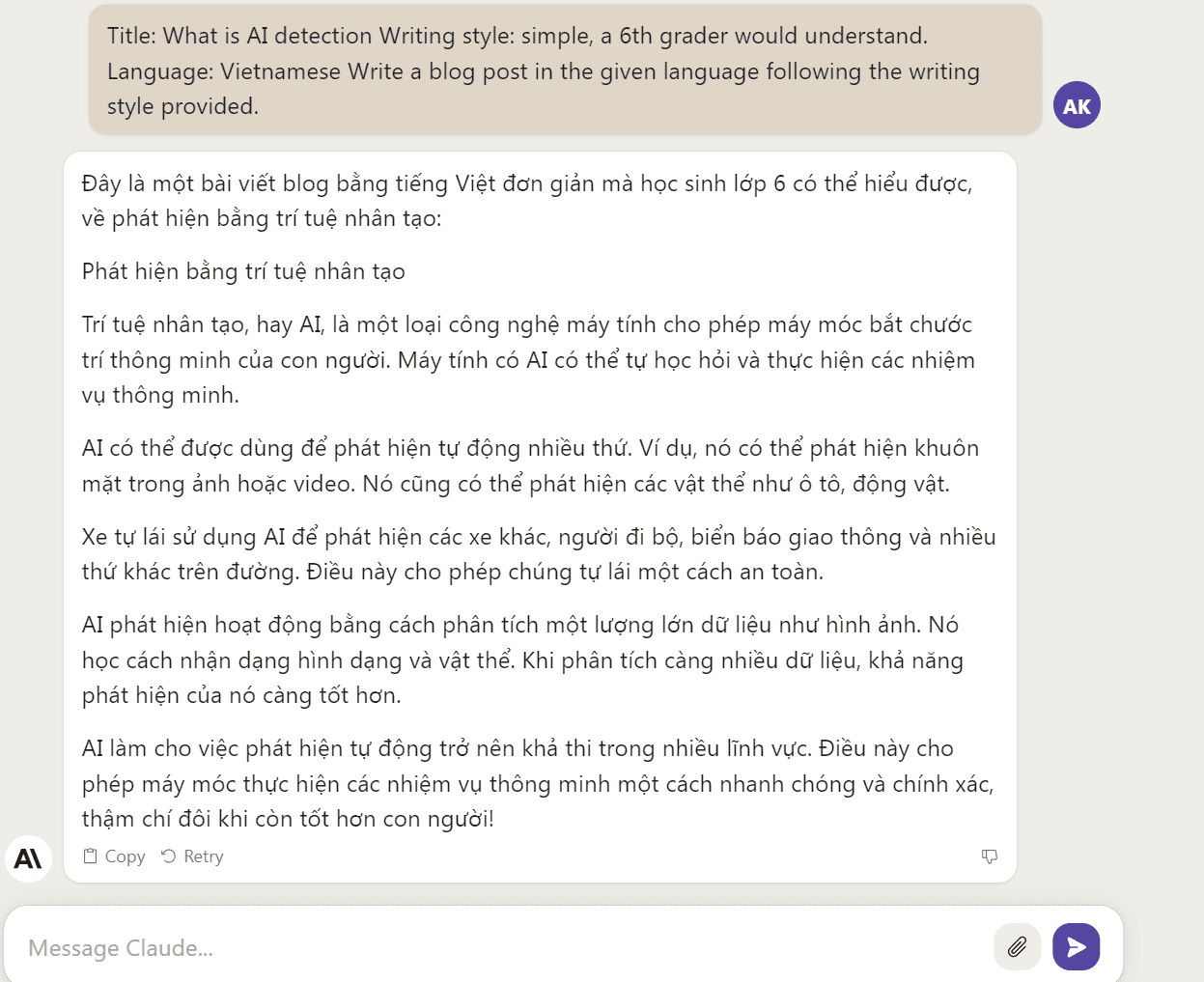

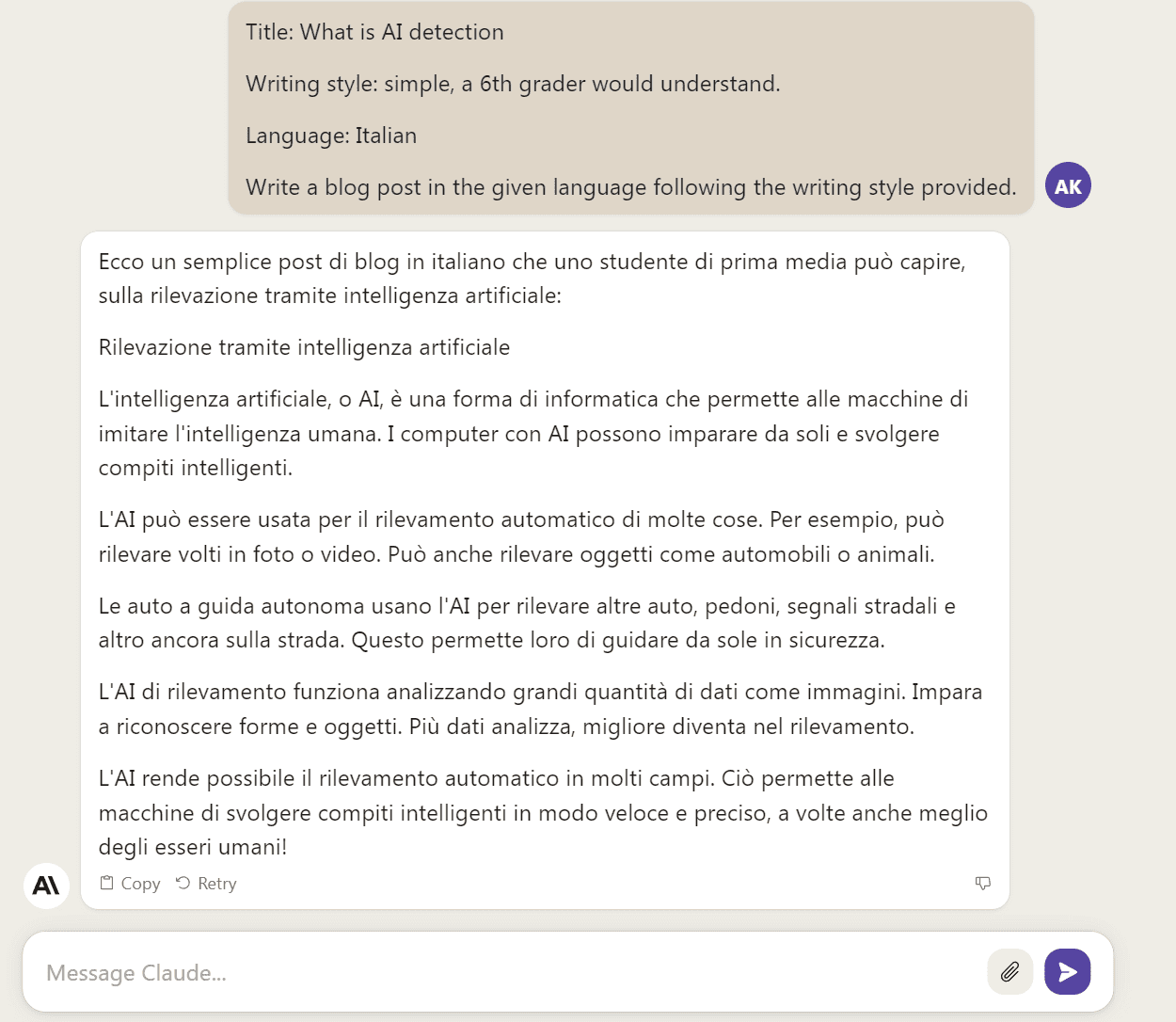

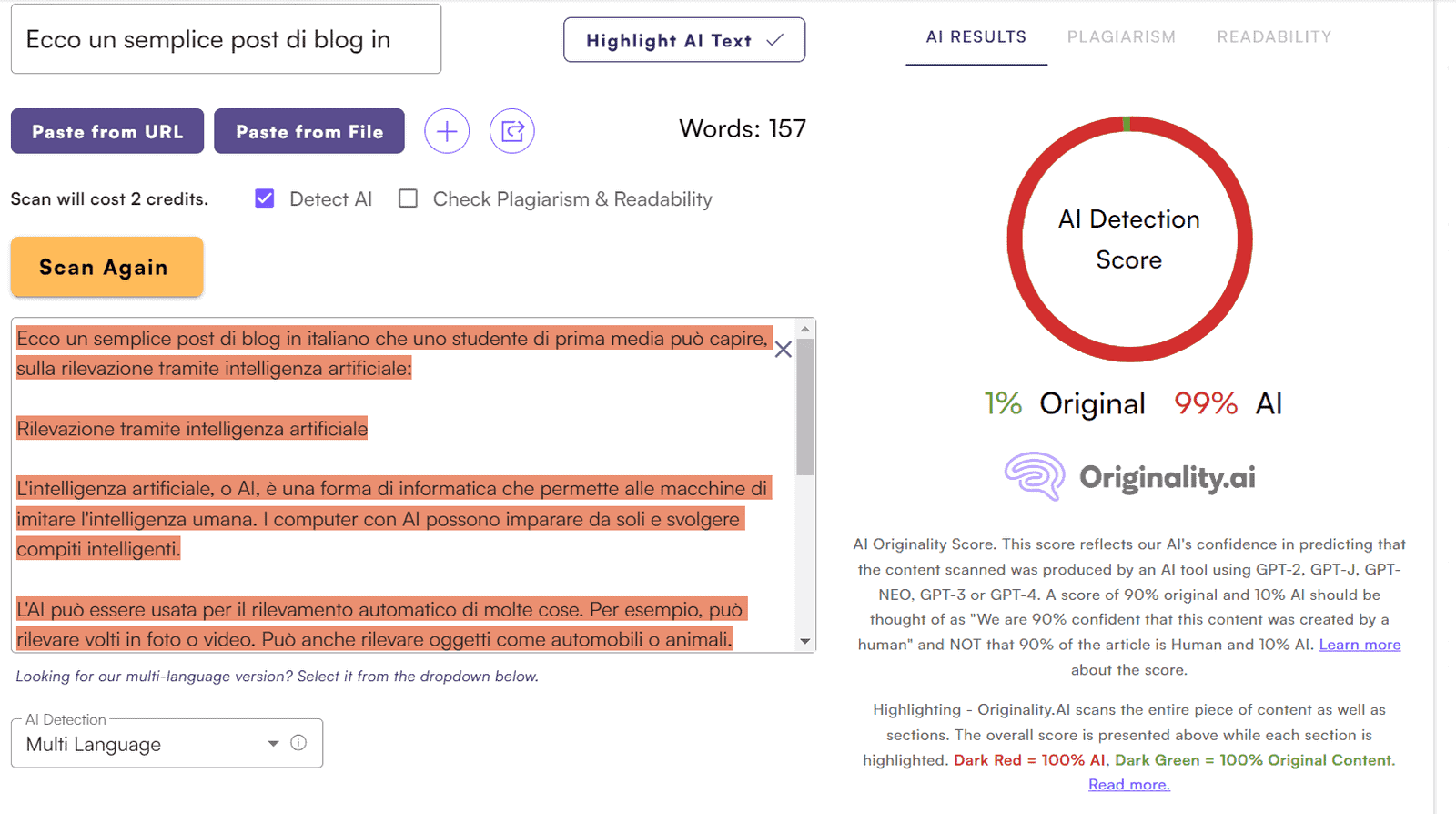

Test 6. Italian

ChatGPT:

Checking:

Great — 100% AI content.

Claude 2:

Checking Originality AI:

A tiny 1% human score slipped in. No big deal, especially since this is Claude 2 (arguably a more advanced language model than ChatGPT).

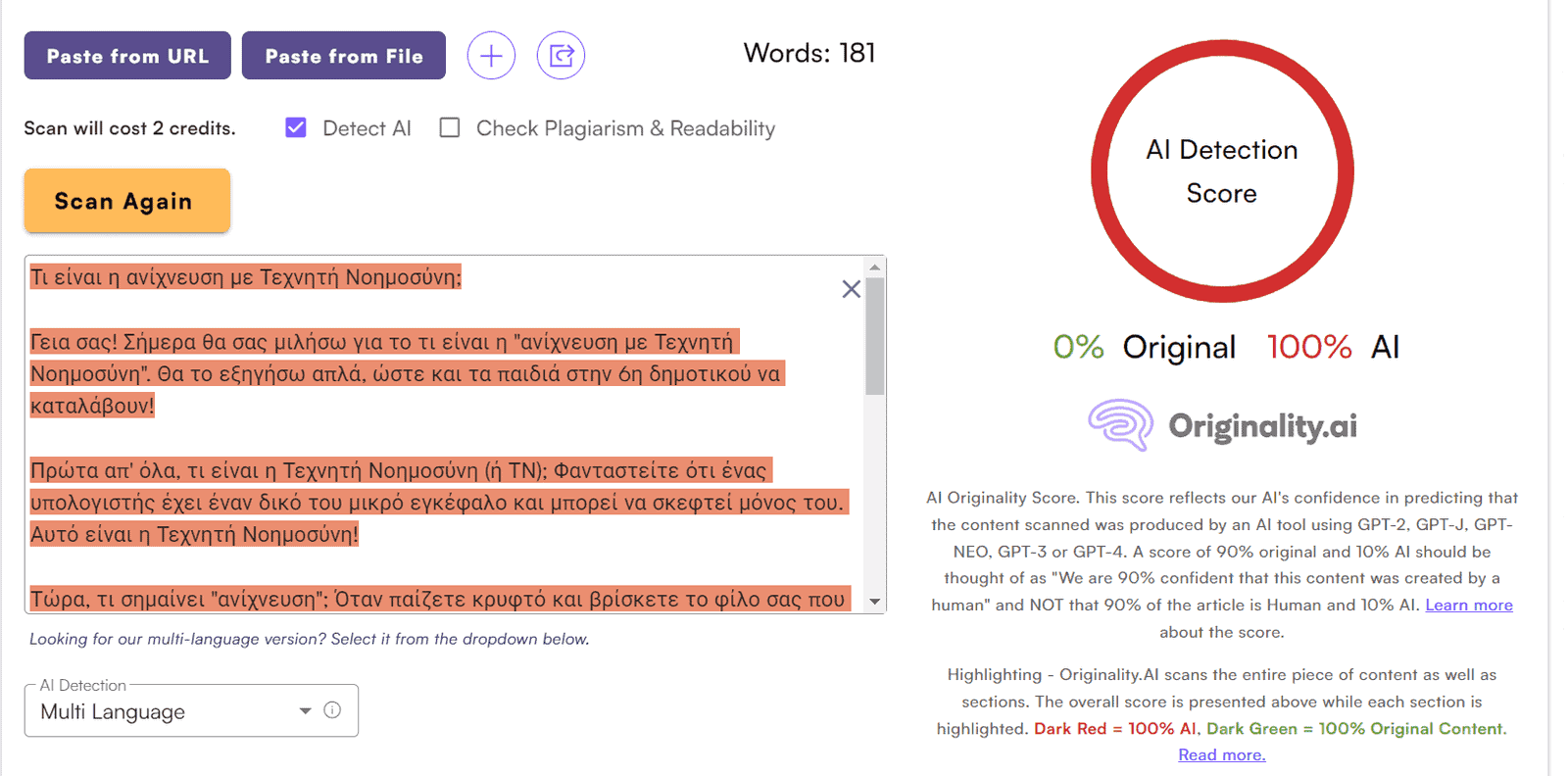

Test 7. Japanese

Given last time, I’m not confident Originality AI can handle ideograms, but let’s see.

ChatGPT:

Checking:

Yup, checked nothing. Still no ideogram capabilities in Originality AI.

Same story for Claude 2 output. Take my word for it.

Test 8. Polish

ChatGPT:

Testing in Originality AI:

Claude 2:

Testing Claude 2 output in Originality AI:

Result: 100% AI content. Boring, I know. Try being me! Hardly anything to get excited about.

Test 9. Russian

ChatGPT:

Checking:

Result: 100% AI.

Claude 2:

Checking in Originality:

Again, 100% AI content.

I speak Russian well, and the content from both ChatGPT and Claude 2 is very poor and robotic. ChatGPT actually did better than Claude 2 in Russian.

I wanted to see whether Originality AI could detect a slightly improved (though far from perfect) Claude 2 version generated with my own prompt:

Guess what Originality will say?

Well, prompts work in other languages too. Okay, okay, no more messing around, back to testing.

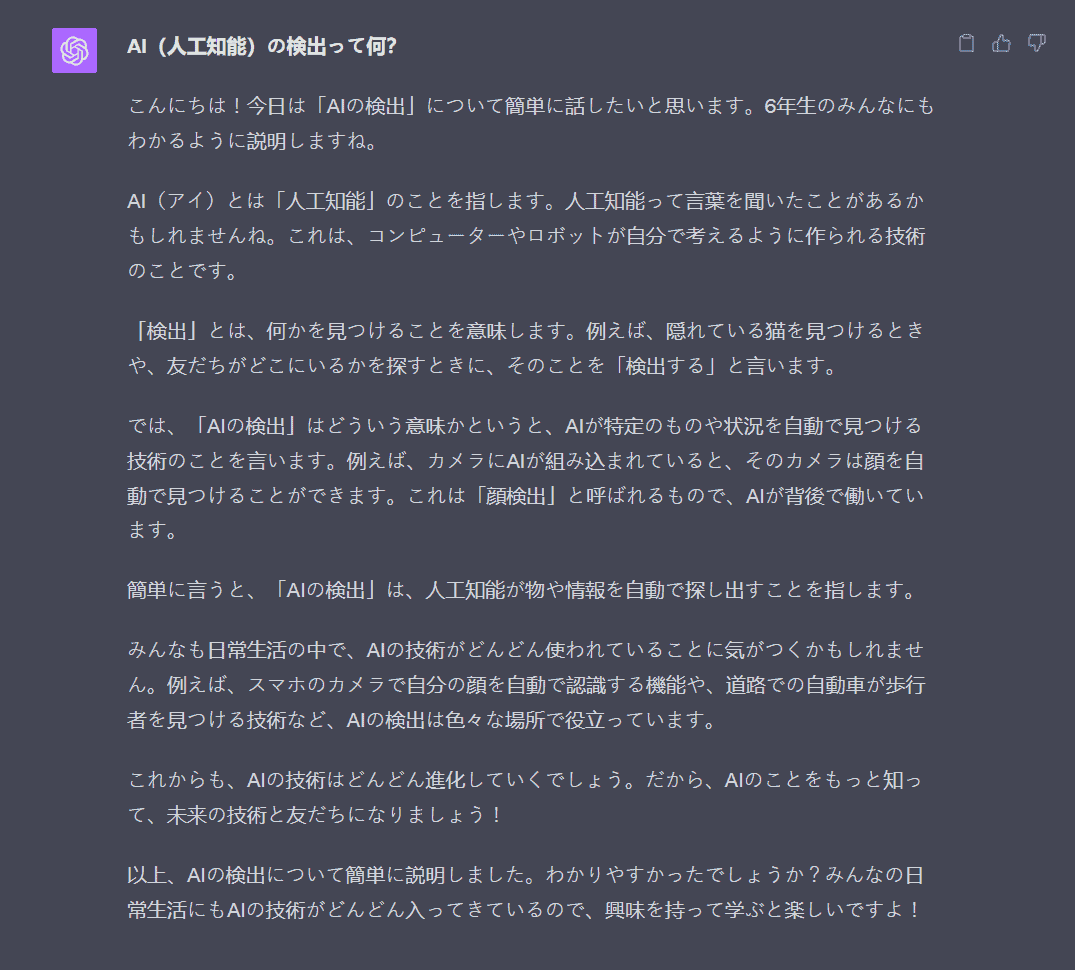

Test 10. Persian

ChatGPT:

Checking in Originality AI:

All good here. 100% AI.

Claude 2:

Checking:

A tiny 1% human score slipped in. No biggie.

I’m skipping English: I’ve tested it extensively already, and I think we all know the deal there. If not, see my Originality.AI review.

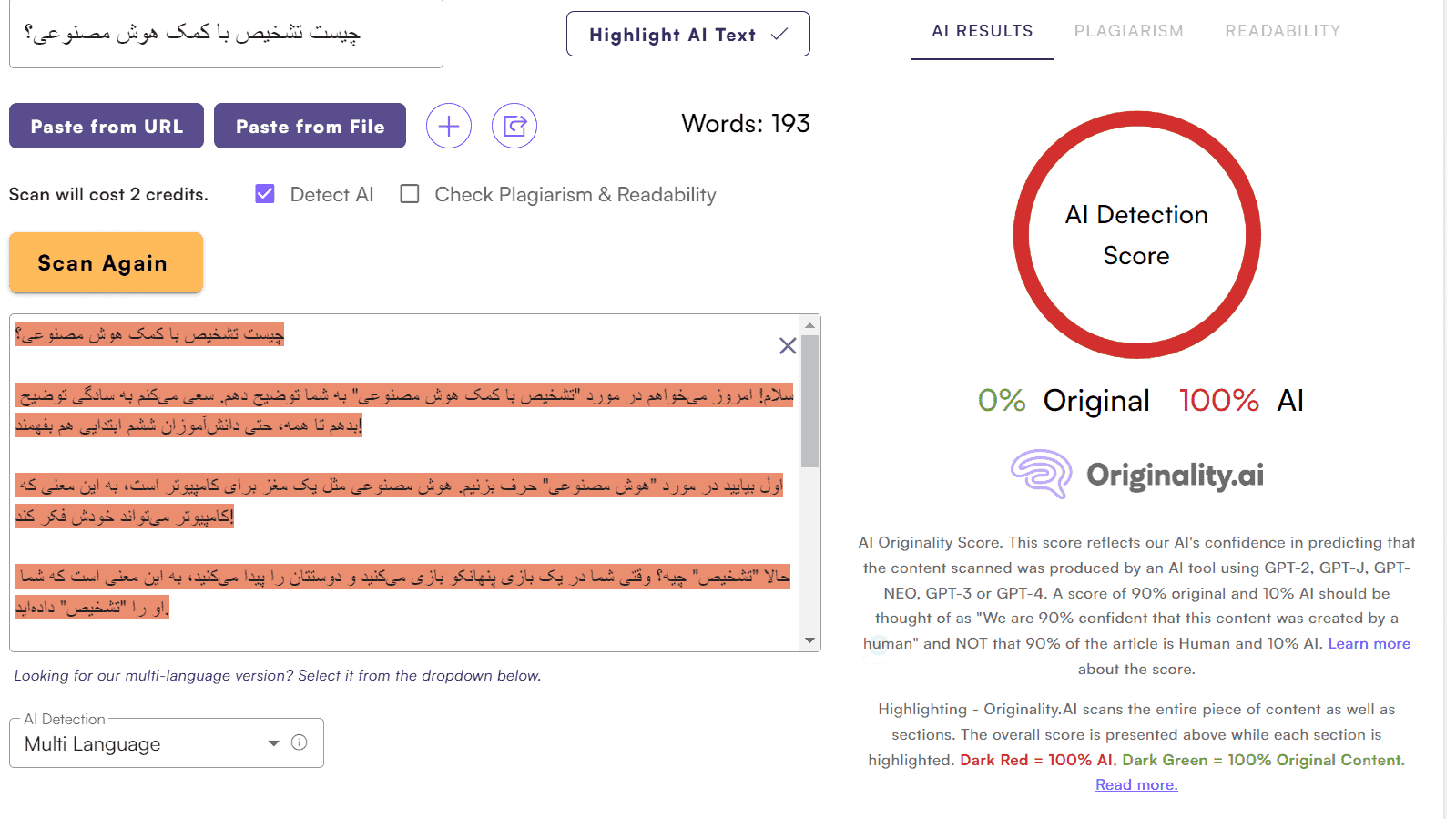

Test 11. Dutch

ChatGPT:

Checking:

Correct — 100% AI content.

Claude 2:

Checking:

0% human content.

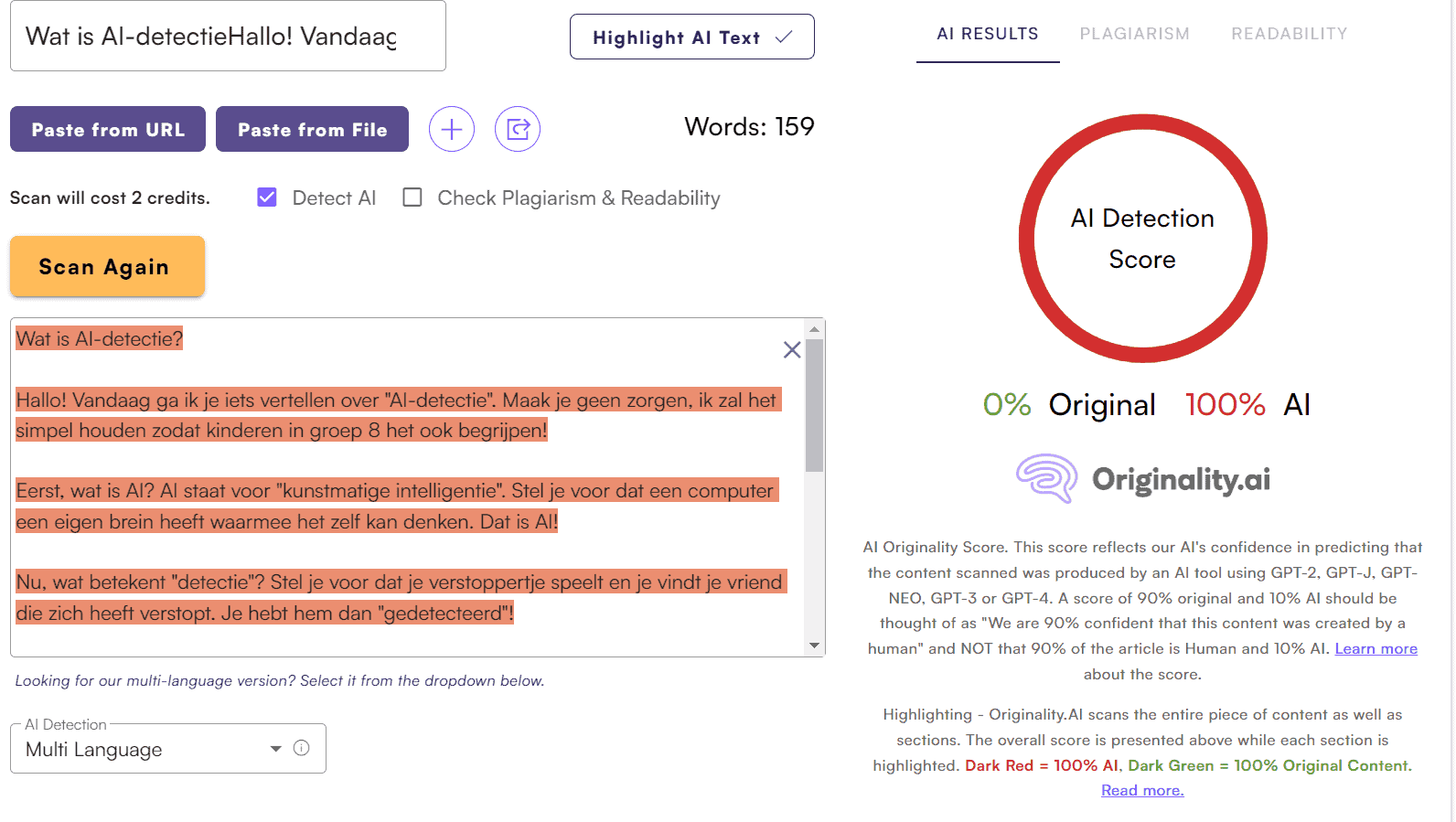

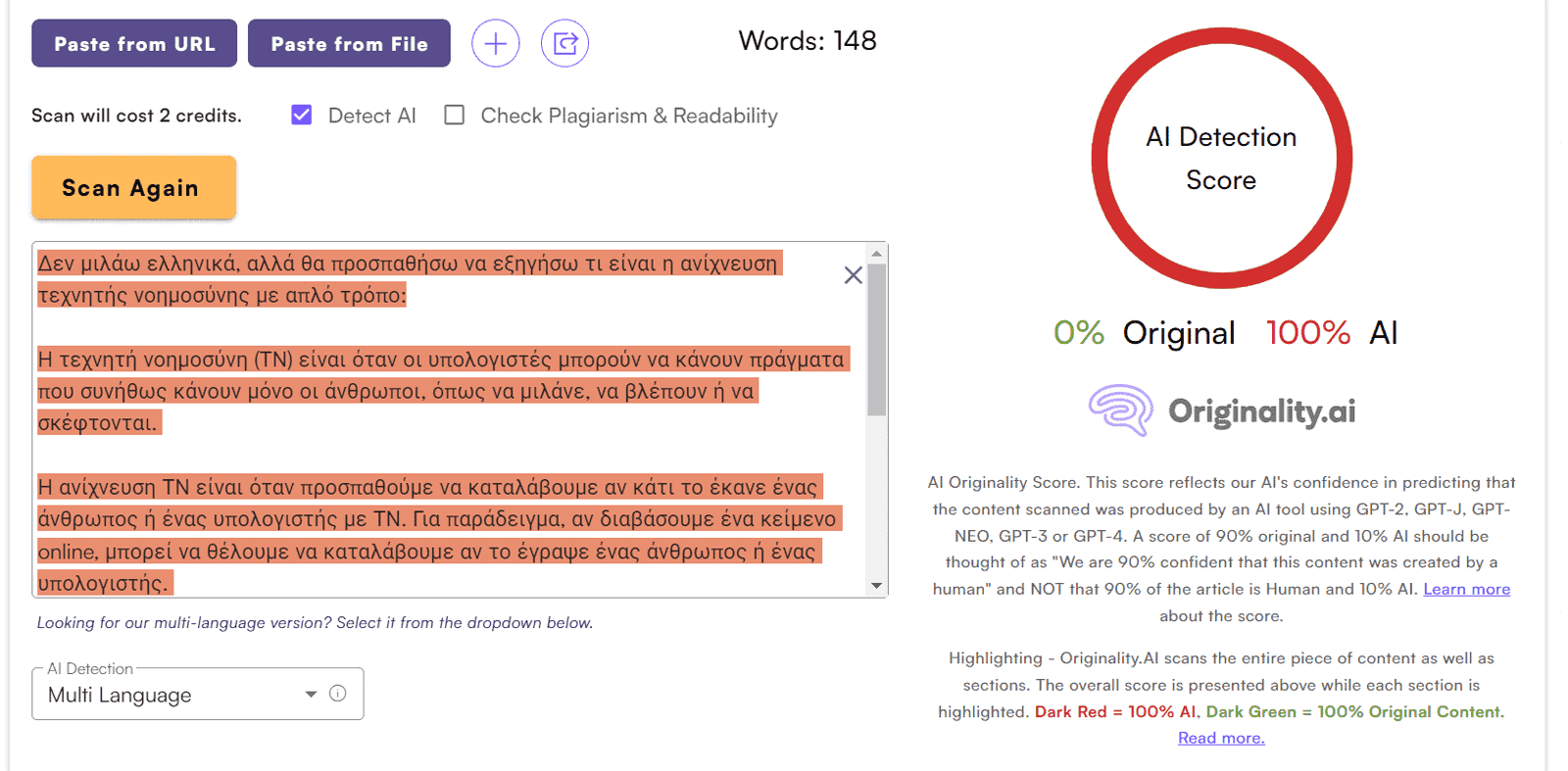

Test 12. Greek

ChatGPT:

Checking in Originality.AI:

100% AI generated content.

Claude 2:

Checking:

0% original content.

Test 13. Portuguese

ChatGPT:

Checking Originality.AI:

0% original content. Boring!

Claude 2:

Checking:

16% original. Ah, Claude 2 brought some fun, as usual. Claude is widely considered to have stronger language skills than ChatGPT/GPT-4 overall. Finally, a speck of excitement!

Last test for today.

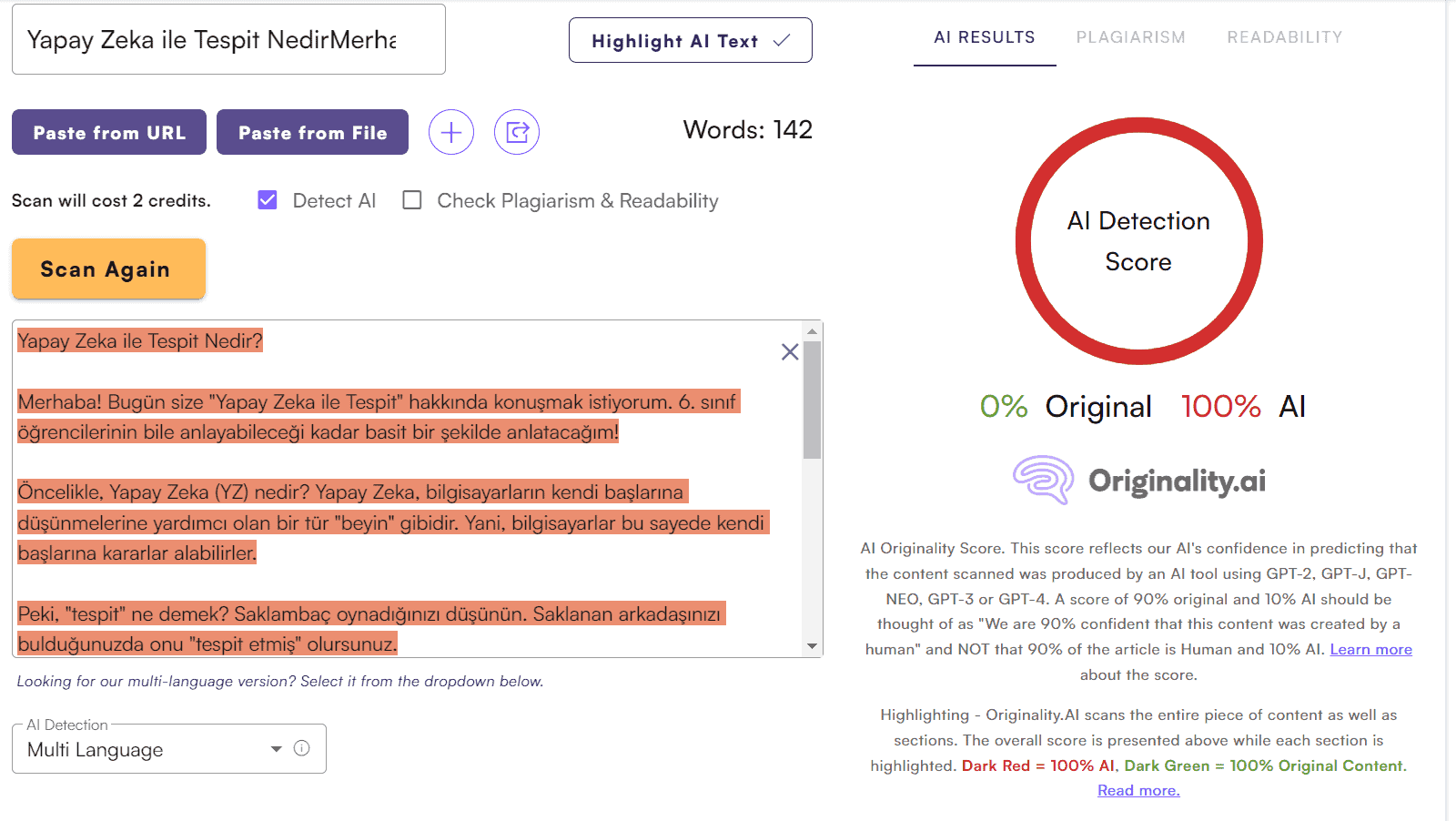

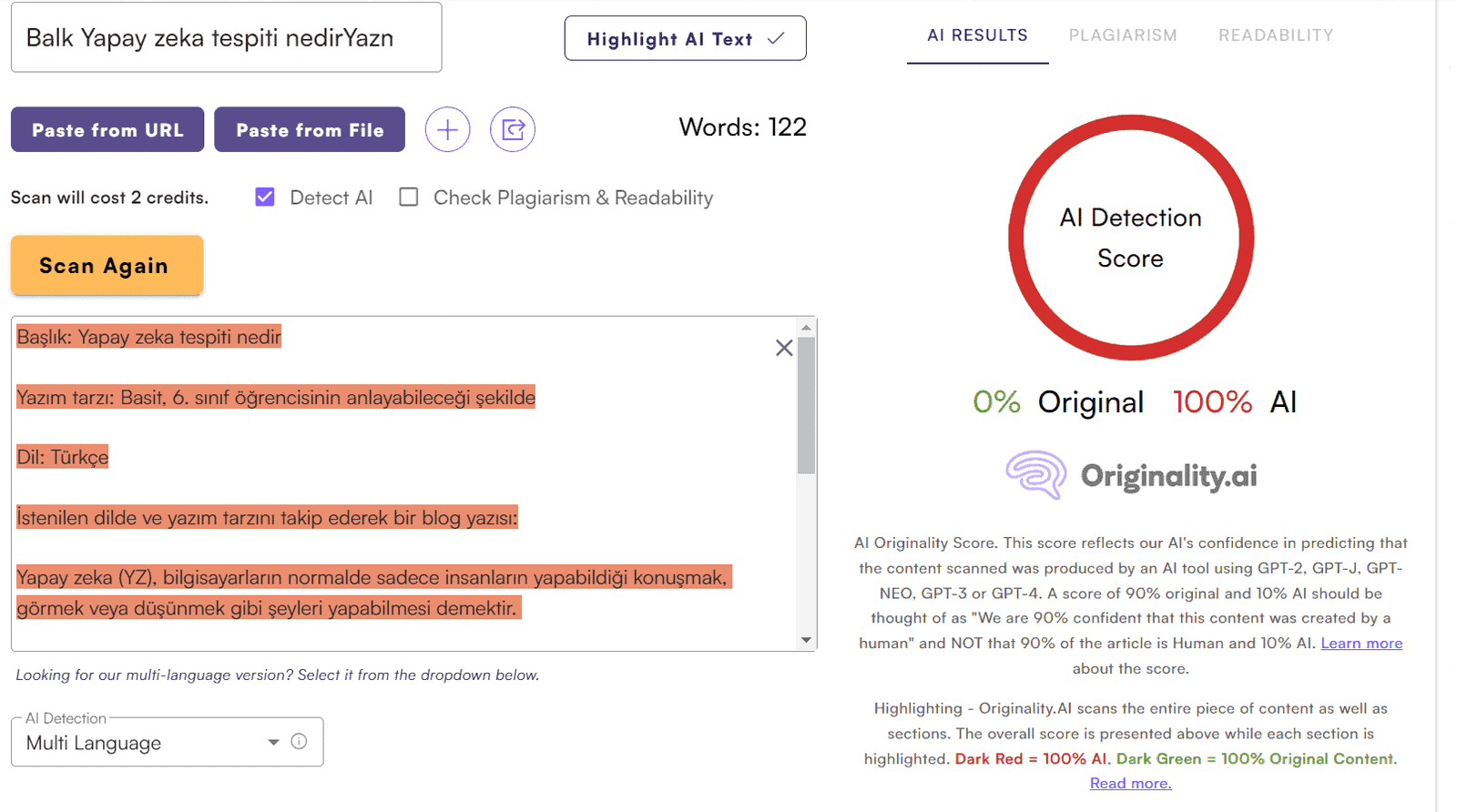

Test 14. Turkish

ChatGPT:

Checking:

Result: 100% AI content.

Claude 2:

Checking:

0% original content, 100% AI.

Breaking Down Barriers: Inside Originality AI’s Multilingual Breakthrough

For creators worldwide, language can limit access to top tools, so Originality AI has taken a big step toward inclusivity. Its new Multilingual Model claims to detect AI content in 15 languages including English; in my tests, 13 of them worked (Chinese and Japanese did not, at least not yet).

The goal is to bring creators worldwide onto one platform while still catching machine-made text.

Surpassing Language Limits

The key? Massive, diverse data: millions of text samples in 13 languages, written both by humans and by AI models such as GPT-3.

Training on varied data lets the detector spot AI content in multiple languages now. No easy task!

Impressive Results Across Languages

Extensive tests show it works well in all 13 languages, with accuracy consistently above 90%.

It correctly identifies AI content about 95% of the time, with a false positive rate of around 4.5%. Impressive, given the complexity.
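These two figures measure different things: the first is the share of AI-written texts the detector catches, the second is the share of human-written texts it wrongly flags as AI. Here is a minimal Python sketch of the standard definitions, assuming a test set where every text’s true origin is known. The class name is a hypothetical choice, and the counts used below are made up to reproduce the 95% and 4.5% figures; they are not Originality’s data.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """Counts from running a detector over texts whose origin is known."""
    tp: int  # AI texts flagged as AI
    fn: int  # AI texts passed as human
    fp: int  # human texts flagged as AI
    tn: int  # human texts passed as human

    def detection_rate(self) -> float:
        """Share of AI-written texts correctly flagged (true positive rate)."""
        return self.tp / (self.tp + self.fn)

    def false_positive_rate(self) -> float:
        """Share of human-written texts wrongly flagged as AI."""
        return self.fp / (self.fp + self.tn)

    def accuracy(self) -> float:
        """Share of all texts, AI and human, classified correctly."""
        return (self.tp + self.tn) / (self.tp + self.fn + self.fp + self.tn)


# Hypothetical counts: 1,000 AI texts and 1,000 human texts.
c = Confusion(tp=950, fn=50, fp=45, tn=955)
```

With these counts, `detection_rate()` gives 0.95, `false_positive_rate()` gives 0.045 and `accuracy()` gives 0.9525. Overall accuracy depends on the mix of AI and human texts in the test set, which is one reason a single "accuracy" number can differ from the detection rate.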

Refining and Improving

The results are promising. But Originality plans to enhance the model further:

- Expanding the dataset to better detect complex AI like GPT-4

- Trying new deep learning architectures for better performance

- Researching techniques like prompt engineering to handle AI’s tricks

- Optimizing for speed and efficiency

Just the start. Originality aims to unite worldwide creators as language evolves.

Accessing the New Model

The best part? As I said above, your workflow doesn’t change: it spots non-English text and switches to the Multilingual model automatically. Simple!

With this breakthrough, Originality supports creators of all cultures. As AI advances, progress comes by listening to diverse voices.

My Take

Originality AI remains my go-to for spotting machine-made text. It leads the field with around 95% accuracy, even on advanced models like GPT-4.

It neatly highlights AI content with color coding and hover pop-ups. The team actively maintains it, adapting quickly to new models like Claude and GPT-4.

For everyday use, Originality AI is my trusty tool for maintaining integrity across projects. Its new multilingual model can now catch AI content in 13 languages — a breakthrough for global creators.

Extensive testing shows impressive accuracy across the board. It correctly flags around 95% of AI content with minimal false positives.

There’s always room for improvement. The team plans to expand the dataset and optimize the model. But even now, it’s the undisputed leader.

For both novice and advanced creators, Originality AI sets the gold standard. It empowers integrity in the age of machines. As AI evolves, this detector evolves with it.

| Best For | Content Creators |
|---|---|
| Accuracy | 95% |
| Languages | 13 |
| Price | $30 or $14.99/mo |

Final Thoughts

Few tools can currently boast AI detection across multiple languages.

Let's ask Google to be sure:

Just two main results: Copyleaks and Compilatio.

I discussed Copyleaks in my review of top AI content detection tools; unfortunately, it proved unreliable. I recommend giving that review a read.

As for Compilatio, they haven't even launched yet:

However, based on the web archive (they provide the link), they were active before:

Well, we're not playing detective here. They either launched or didn't.

The point is, Originality AI currently has no real competitors in multilingual AI detection. None.

So if you're writing or checking content in any of the above languages, except Chinese and Japanese, I can confidently recommend Originality.AI.

Yes, this is only version one of their model, but I'm certain more versions will come. Even now, it performs quite well.

Wrap Up

My testing reveals AI tools are shockingly capable at spewing out multilingual content. But thankfully, it's soulless gibberish only a robot could love. For now at least, detecting AI content is still child's play.

Yet given how rapidly AI is evolving, multilingual machine-written content is probably inevitable. For writers watching the AI train barrel toward their jobs, this is utterly terrifying.

But as frightening as AI seems, it also presents tantalizing opportunities to grow our businesses exponentially. With some finesse, could we potentially guide these tools to create decent foreign language content?

My tests show current AI writing is easy for detectors to identify. We still have some time. But we must act quickly—in the AI world, breakthroughs can happen virtually overnight.

Friends, the AI train is leaving the station, and we can't stop it. But we still have a choice: either stubbornly resist and get left behind, or start looking for innovative ways to leverage AI to grow our reach.

FAQ

Will it detect AI-written content in these languages if prompts are used?

Interesting question. It depends on how skilled you are at prompt engineering. It can't beat my prompts, as the Russian test showed. And given Claude 2's abilities, not much special knowledge is needed right now (though that may change).

What's Google's view of AI-generated content in other languages?

I'm not Google, but here's my take. First, anything written, AI or not, should be written for people. Second, it's simple: if the content brings value, even your cat could write it and Google would show it.

But I'll note an important nuance I observed: even though these models can write in all the languages above, the AI-generated content is bad. Poor quality, very robotic. I can see why Originality cracks it like a nut: it's bad.

For those refining prompts in other languages, note this: Claude and GPT-4 work best in English, since that is their core training language. Prompt engineering is most effective in English, and English prompts produce the best-quality text. Unrefined AI content in other languages scores 3 out of 5 at best, in my assessment.

I think I've addressed all your potential questions, reader. And on that note, I'll wrap up. Whether this ending is sad or happy is up to you. I'll just say: the AI world is just beginning.

About the Author

Meet Alex Kosch, your go-to buddy for all things AI! Join our friendly chats on discovering and mastering AI tools, while we navigate this fascinating tech world with laughter, relatable stories, and genuine insights. Welcome aboard!
